SURE Products

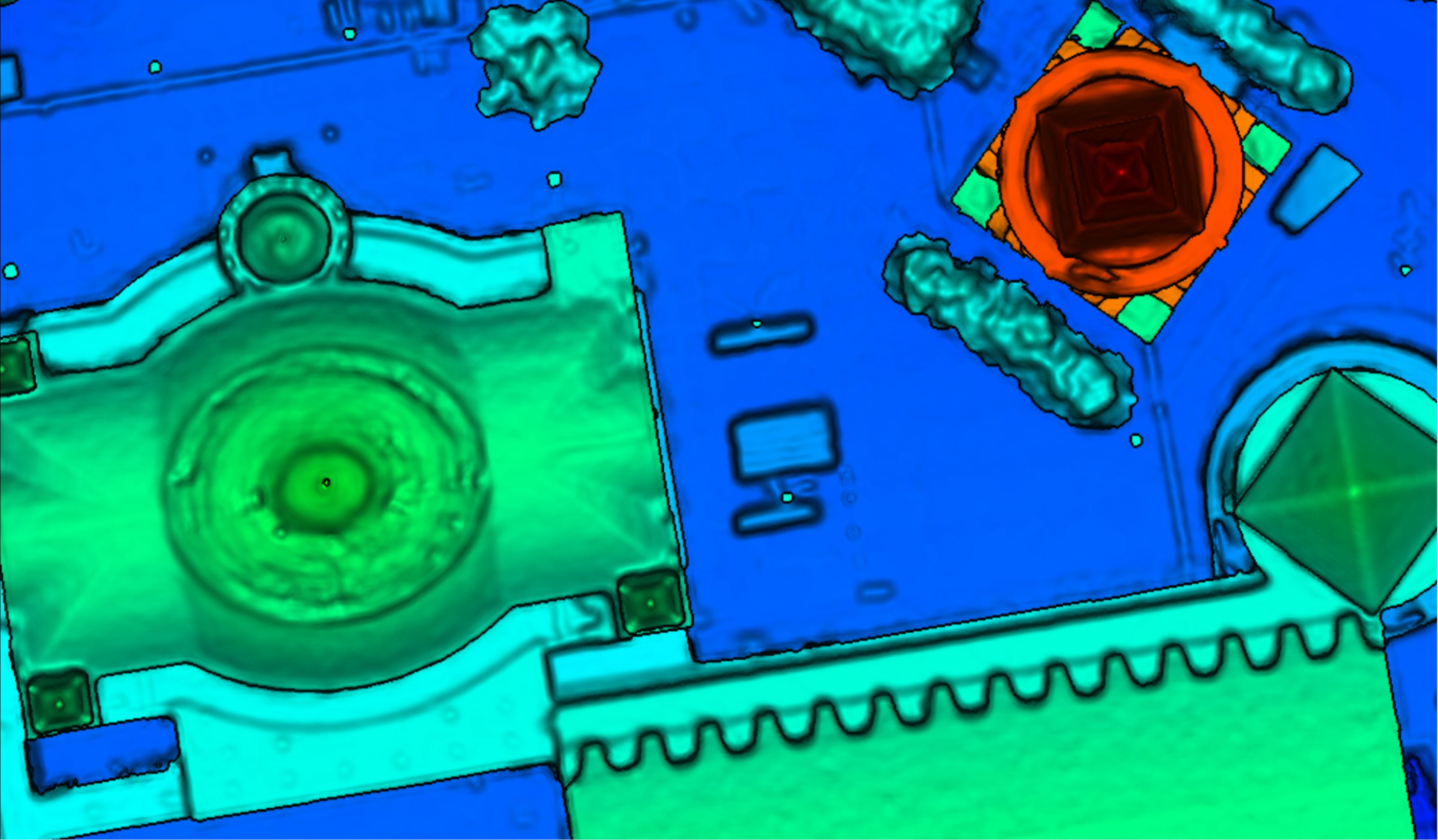

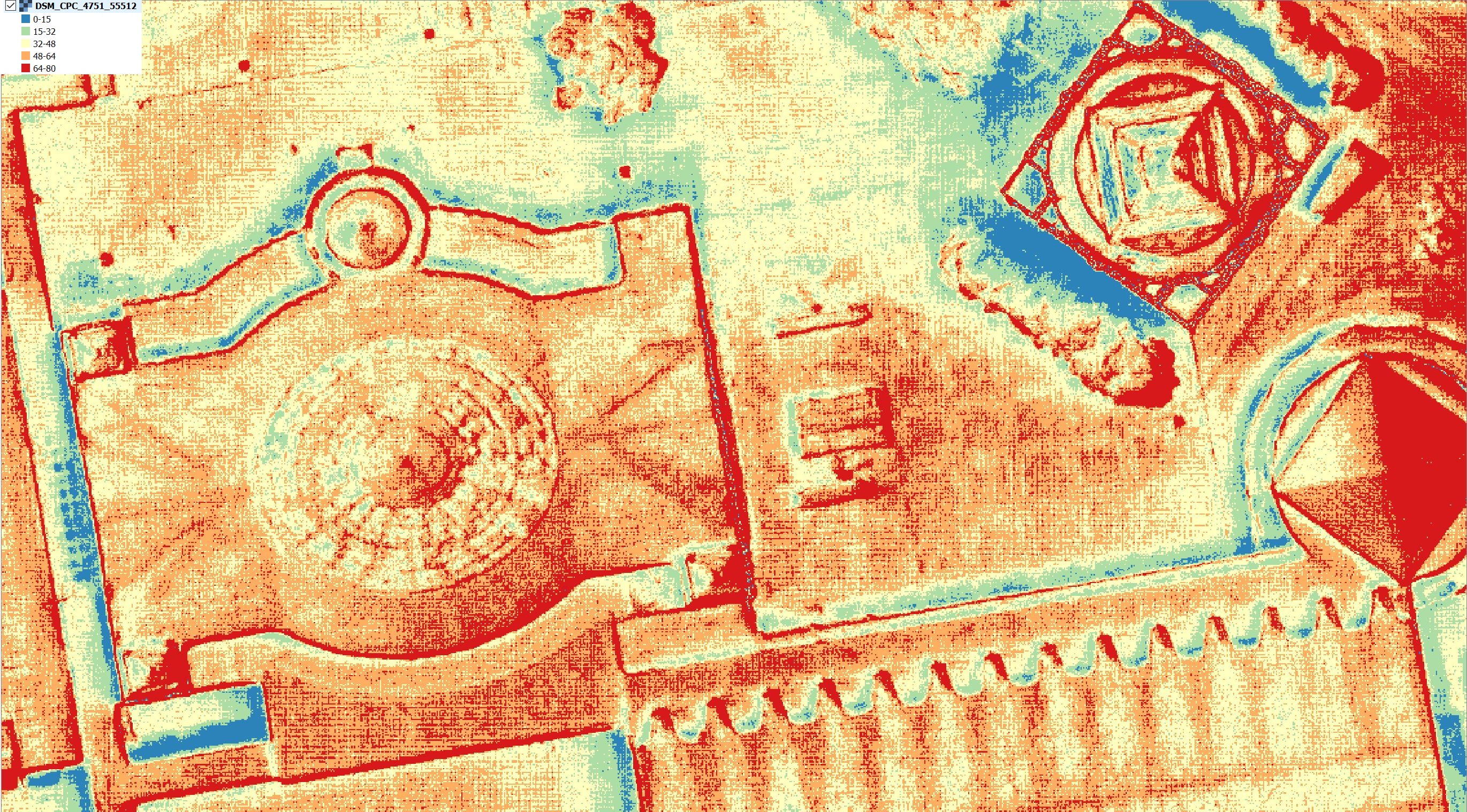

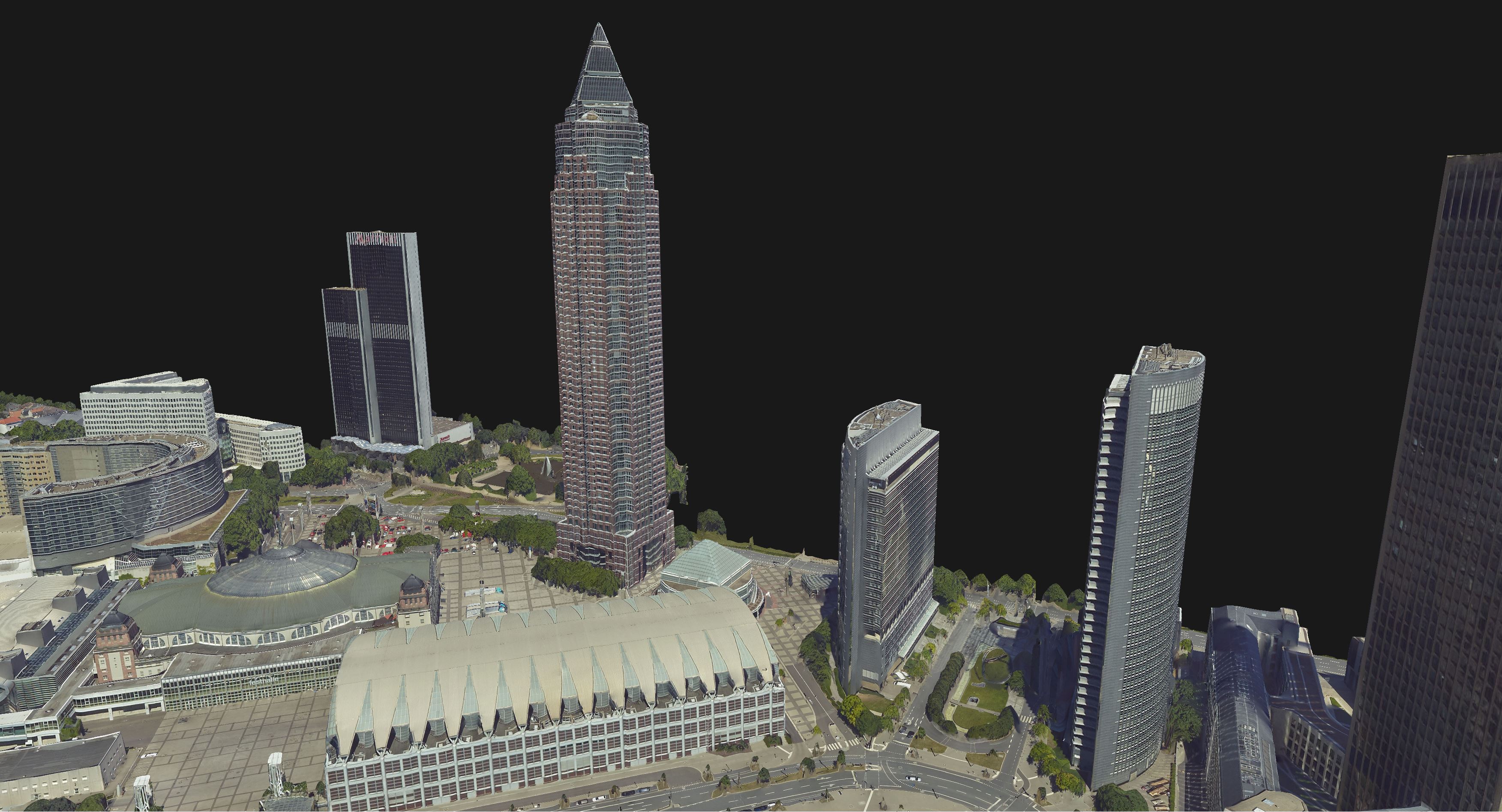

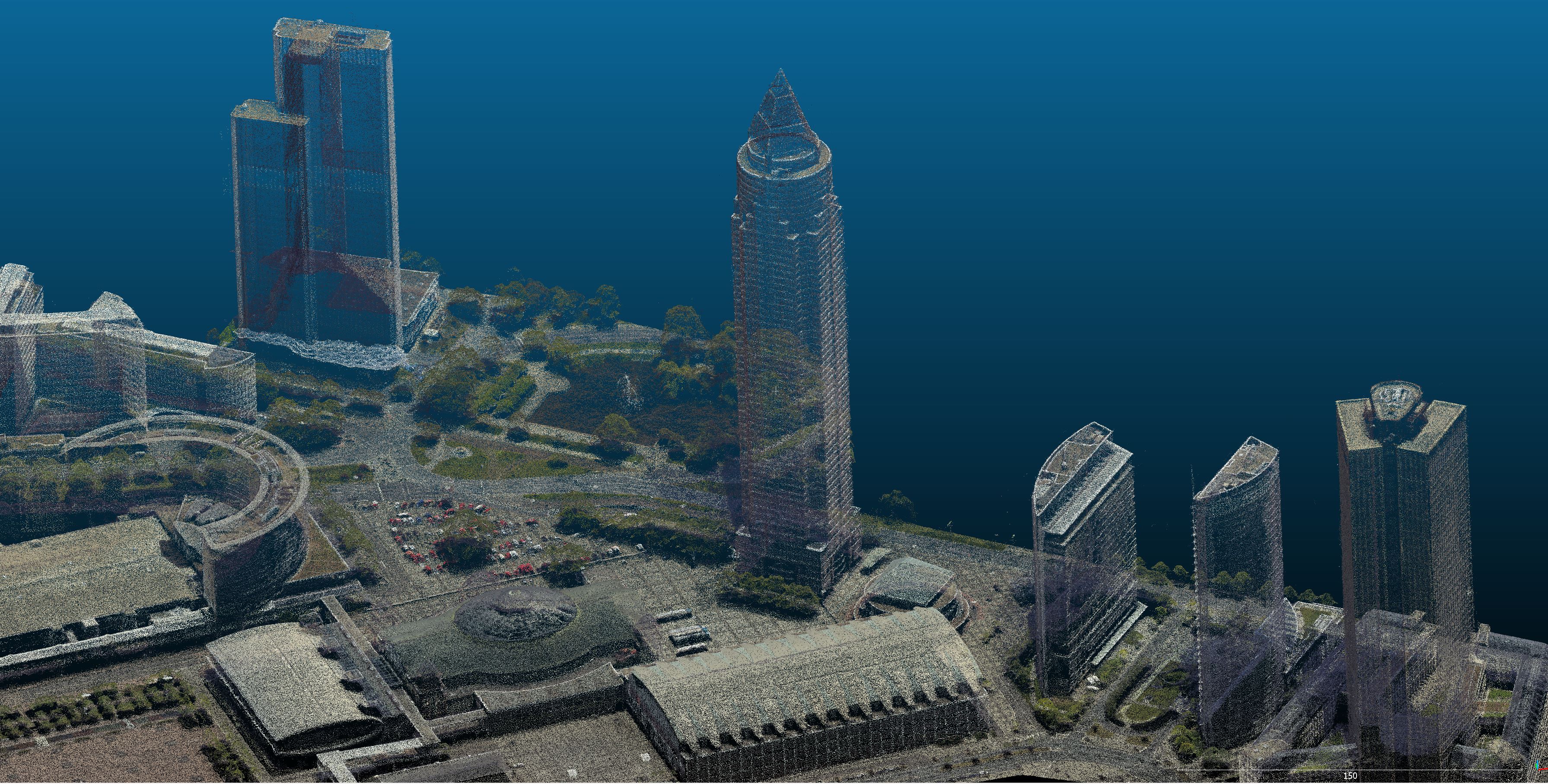

SURE uses state-of-the-art algorithms from the fields of Photogrammetry and Computer Vision to transform input images and/or LiDAR data into georeferenced Raster Products (DSM, True Ortho, etc.), Point Clouds and Meshes.

To allow efficient and scalable processing, SURE divides the surface to be processed into tiles. Georeferencing information is added to each exported file if the format supports it (LAS, LAZ, TIFF/GeoTIFF) and a coordinate system was provided during the Project Setup.
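
To illustrate the idea of tiling, the following is a minimal sketch of how a rectangular project extent could be split into a regular grid of square tiles. It is not SURE's actual tiling scheme: the function name `split_into_tiles`, the `Tile` type, and the `tile_size` parameter are hypothetical and chosen only for this example, and the coordinates are assumed to be in the units of the project coordinate system.

```python
import math
from typing import List, NamedTuple


class Tile(NamedTuple):
    """One grid cell (hypothetical type, for illustration only)."""
    col: int
    row: int
    min_x: float
    min_y: float
    max_x: float
    max_y: float


def split_into_tiles(min_x: float, min_y: float,
                     max_x: float, max_y: float,
                     tile_size: float) -> List[Tile]:
    """Split a bounding box into square tiles of edge length tile_size.

    Tiles on the upper and right borders are clipped to the bounding box,
    so they may be smaller than tile_size.
    """
    if tile_size <= 0:
        raise ValueError("tile_size must be positive")
    if max_x <= min_x or max_y <= min_y:
        raise ValueError("bounding box must have positive width and height")

    # Number of tiles needed to cover the extent in each direction.
    cols = math.ceil((max_x - min_x) / tile_size)
    rows = math.ceil((max_y - min_y) / tile_size)

    tiles = []
    for row in range(rows):
        for col in range(cols):
            x0 = min_x + col * tile_size
            y0 = min_y + row * tile_size
            tiles.append(Tile(col, row, x0, y0,
                              min(x0 + tile_size, max_x),
                              min(y0 + tile_size, max_y)))
    return tiles


if __name__ == "__main__":
    # Example: a 250 x 100 extent with 100-unit tiles.
    for tile in split_into_tiles(0, 0, 250, 100, 100):
        print(tile)
```

Because each tile covers an independent part of the surface, tiles can in principle be processed separately and in parallel, which is what makes this kind of subdivision attractive for large projects.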

Select any of the products below to learn more about them: